In more detail, if I force PyTorch to convert a tensor x to CUDA with x.cuda() I get the error: Found no NVIDIA driver on your system. However, PyTorch doesn’t seem to find CUDA: $ python -c 'import torch print(_available())' Lastly, I’ve installed PyTorch from scratch with conda conda install pytorch torchvision -c pytorchĪlso error as far as I can tell: $ conda list

Since this is also the version in the Ubuntu repositories, I simple installed the CUDA Toolkit with: $ sudo apt-get-installed nvidia-cuda-toolkitĪnd again, this seems be alright: $ nvcc -versionĬopyright (c) 2005-2017 NVIDIA CorporationĬuda compilation tools, release 9.1, V9.1.85Īnd $ apt-cache policy nvidia-cuda-toolkit |=|ĭriver version 390.xx allows to run CUDA 9.1 (9.1.85) according the the NVIDIA docs. | Fan Temp Perf Pwr:Usage/Cap| Memory-Usage | GPU-Util Compute M. | GPU Name Persistence-M| Bus-Id Disp.A | Volatile Uncorr.

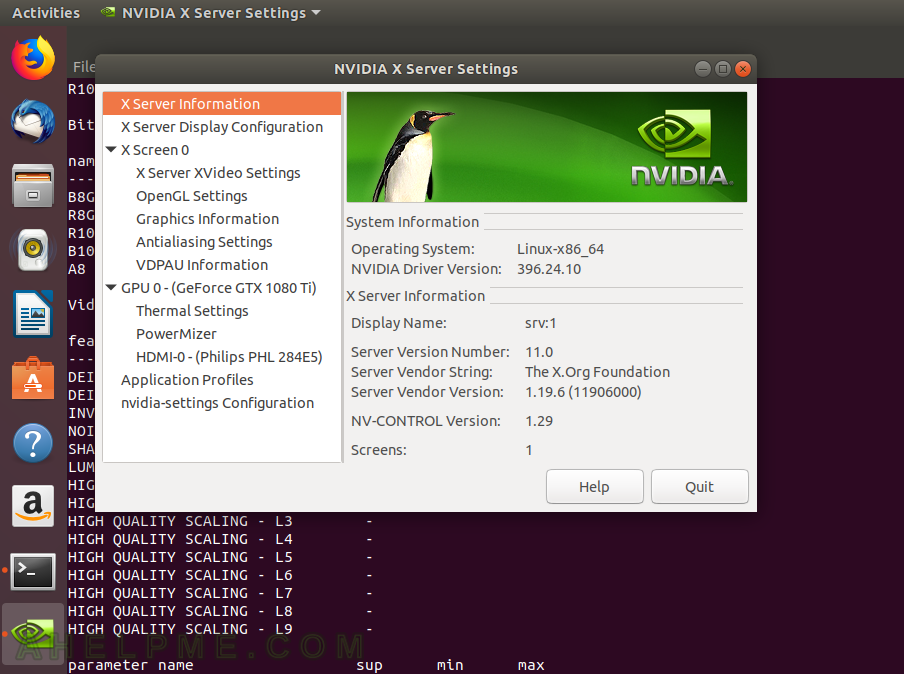

I also installed the latest NVIDIA drivers that are currently in the repository and that seems to be fine: $ nvidia-smi The NVS 310 handles my 2-monitor setup, I only want to utilize the 1080 for PyTorch. Both cards a correctly identified: $ lspci | grep VGAĠ3:00.0 VGA compatible controller: NVIDIA Corporation GF119 (reva1)Ġ4:00.0 VGA compatible controller: NVIDIA Corporation GP102 (rev a1) I’ve added an GeForce GTX 1080 Ti into my machine (Running Ubuntu 18.04 and Anaconda with Python 3.7) to utilize the GPU when using PyTorch.
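The driver/toolkit reasoning above can be checked mechanically. Below is a minimal sketch, assuming the minimum Linux driver versions in the table (taken from the compatibility table in NVIDIA's CUDA Toolkit release notes; double-check them for your exact release) and a hypothetical driver string "390.48" standing in for the 390.xx driver. The names `MIN_LINUX_DRIVER`, `parse_nvcc_release`, `parse_driver_version` and `driver_supports` are illustrative helpers, not part of any NVIDIA tool.

```python
import re

# Assumed minimum Linux driver per CUDA toolkit release, copied from the
# compatibility table in NVIDIA's CUDA Toolkit release notes (verify these).
MIN_LINUX_DRIVER = {
    "9.0": (384, 81),   # CUDA 9.0.76
    "9.1": (390, 46),   # CUDA 9.1.85
    "9.2": (396, 26),   # CUDA 9.2.88
    "10.0": (410, 48),  # CUDA 10.0.130
}


def parse_nvcc_release(nvcc_output: str) -> str:
    """Extract the toolkit release (e.g. '9.1') from `nvcc --version` output."""
    match = re.search(r"release (\d+\.\d+)", nvcc_output)
    if match is None:
        raise ValueError("no 'release X.Y' found in nvcc output")
    return match.group(1)


def parse_driver_version(version: str) -> tuple:
    """Turn a driver string such as '390.48' into a comparable tuple (390, 48)."""
    return tuple(int(part) for part in version.split("."))


def driver_supports(driver_version: str, cuda_release: str) -> bool:
    """True if the driver meets the assumed minimum for this CUDA release."""
    if cuda_release not in MIN_LINUX_DRIVER:
        raise KeyError(f"no minimum driver recorded for CUDA {cuda_release}")
    # Tuples compare element by element, so (390, 48) >= (390, 46) holds.
    return parse_driver_version(driver_version) >= MIN_LINUX_DRIVER[cuda_release]


if __name__ == "__main__":
    nvcc_out = (
        "Copyright (c) 2005-2017 NVIDIA Corporation\n"
        "Cuda compilation tools, release 9.1, V9.1.85"
    )
    release = parse_nvcc_release(nvcc_out)
    # Hypothetical driver version standing in for the 390.xx driver from the post.
    print(release, driver_supports("390.48", release))
    print("10.0", driver_supports("390.48", "10.0"))
```

The same comparison applies to the CUDA version a PyTorch binary was built against, which `torch.version.cuda` reports. Prebuilt conda PyTorch packages bring their own CUDA runtime, so the system `nvcc` release may not be the version that actually has to match the driver.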

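To see what PyTorch itself reports, a small diagnostic can help. This is a sketch that assumes only the public calls `torch.cuda.is_available`, `torch.cuda.device_count`, `torch.cuda.get_device_name` and the attribute `torch.version.cuda`. The function names `cuda_report` and `pick_device` are hypothetical, and the default name fragment "1080" is an assumption based on the card in question. `pick_device` falls back to the CPU, so `x.to(pick_device())` keeps running instead of raising when no usable driver is found.

```python
def cuda_report() -> dict:
    """Collect what PyTorch reports about CUDA (hypothetical helper)."""
    try:
        import torch
    except ImportError:
        return {"torch_installed": False}

    report = {
        "torch_installed": True,
        "torch_version": torch.__version__,
        # CUDA version this PyTorch binary was built against (None for CPU-only builds).
        "built_with_cuda": torch.version.cuda,
        "cuda_available": torch.cuda.is_available(),
        "devices": [],
    }
    if report["cuda_available"]:
        report["devices"] = [
            torch.cuda.get_device_name(i) for i in range(torch.cuda.device_count())
        ]
    return report


def pick_device(name_fragment: str = "1080"):
    """Return the first CUDA device whose name contains name_fragment, else the CPU."""
    import torch

    if torch.cuda.is_available():
        for i in range(torch.cuda.device_count()):
            if name_fragment in torch.cuda.get_device_name(i):
                return torch.device(f"cuda:{i}")
    return torch.device("cpu")


if __name__ == "__main__":
    for key, value in cuda_report().items():
        print(f"{key}: {value}")
```

Alternatively, launching Python with `CUDA_VISIBLE_DEVICES` set to the 1080 Ti's index hides the other card from CUDA. CUDA orders devices fastest-first by default, so setting `CUDA_DEVICE_ORDER=PCI_BUS_ID` makes those indices follow the `lspci` bus order.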